The Insight

Memory infrastructure for AI agents

"The graph stays dumb. The agent gets smarter."

Microsoft — Azure SQL team. Worked on the storage engine for Azure SQL Hyperscale. 2 patents.

Meta — Infrastructure (blob storage). Built Dagger: AI task execution system, 200+ autonomous tasks, top 8% of Claude Code users at Meta.

Education — Georgia Institute of Technology (MS) · IIT Hyderabad (BTech)

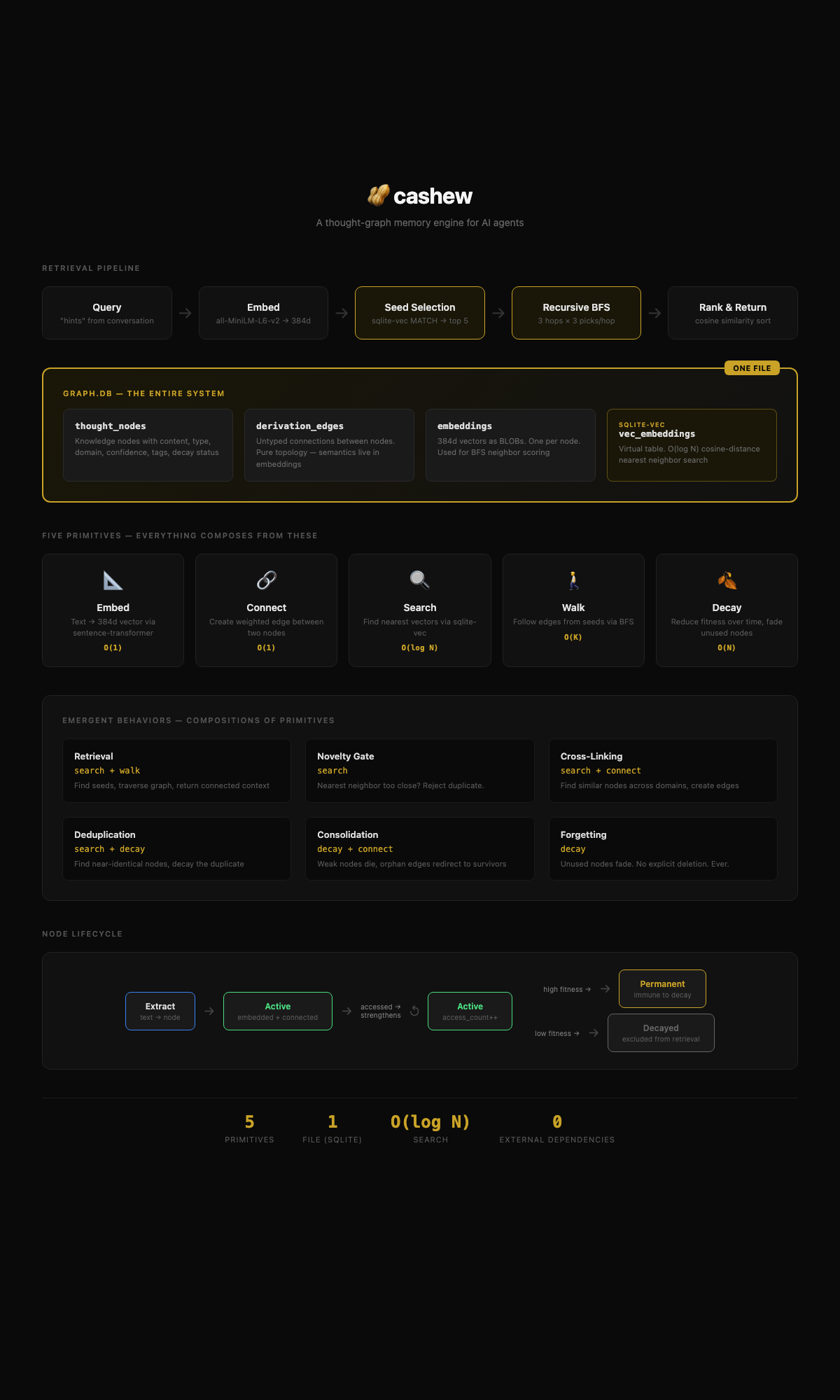

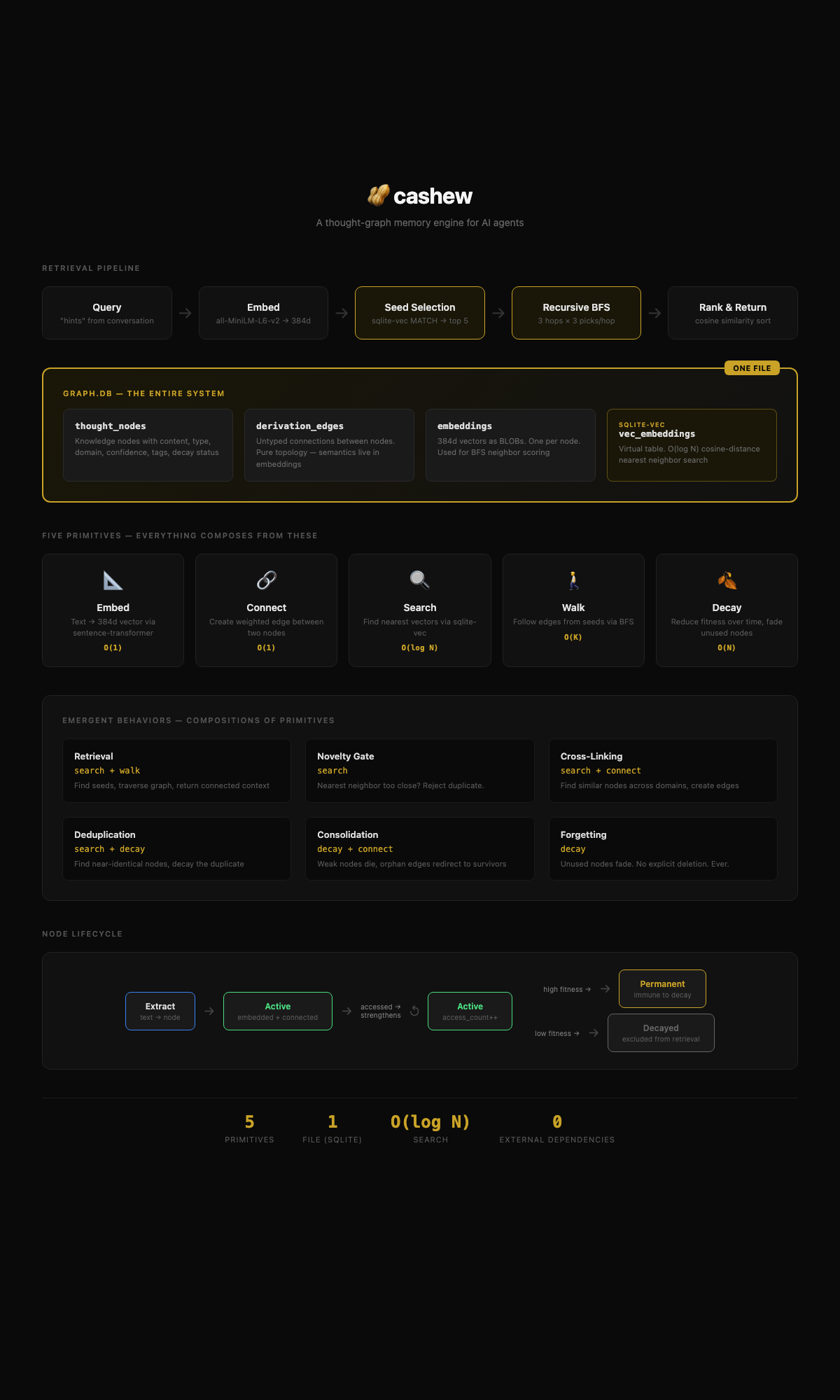

AI agents are goldfish — no memory between sessions

Recall degrades after 10K memories. Similarity search can't capture complex relationships.

O(n) token cost, no filtering. Every memory retrieved costs tokens, whether relevant or not.

Middleware, not architecture. Band-aids on fundamentally broken storage models.

3,200+ nodes across 6 months of daily use. Decisions, preferences, patterns — retrieved in ~50ms. The AI that actually knows you.

Beyond search: why code exists, how components relate, what decisions led here. The graph remembers what documentation never captured.

Multiple agents, separated responsibilities, shared context. Each agent sees its own domain while the graph gives them all the big picture. One memory layer, many agents.

When senior employees leave, their context walks out the door. Cashew captures reasoning patterns, decisions, and domain expertise. The graph stays.

Thousands of conversations, hundreds of deals. Pattern recognition across them is where alpha lives. "This resembles your thesis on X, which failed because Y."

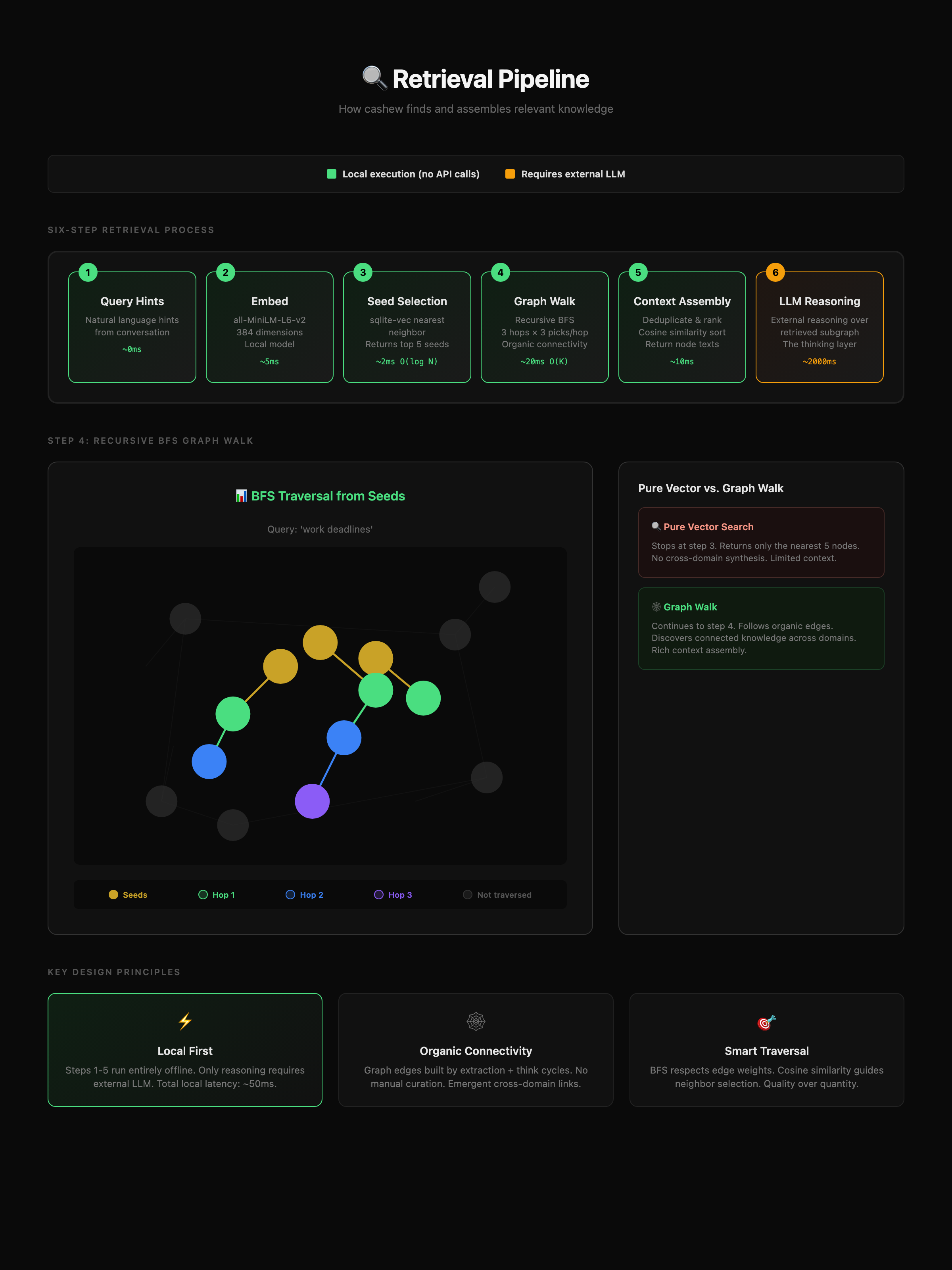

Retrieval: ~50ms local. Only reasoning needs an LLM.

When sleep cycles run, they create nodes that didn't exist before, synthesized from graph structure rather than from any single input. The system generates new knowledge instead of merely consolidating what it already has.

Low-value nodes fade naturally. Unlike traditional databases, Cashew forgets what isn't important, which keeps the graph clean.
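
As a rough illustration of how fading could work, here is a minimal sketch that assumes an exponential half-life decay on a per-node importance score. The `Node` fields, the 30-day half-life, and the 0.1 threshold are all hypothetical, chosen for the example; the deck does not specify the actual decay rule.

```python
from dataclasses import dataclass


@dataclass
class Node:
    id: str
    importance: float        # hypothetical base score in [0, 1]
    last_access_days: float  # days since the node was last retrieved


def decayed_score(node: Node, half_life_days: float = 30.0) -> float:
    """Halve the node's importance for every half-life it goes unused."""
    return node.importance * 0.5 ** (node.last_access_days / half_life_days)


def prune(nodes: list[Node], threshold: float = 0.1,
          half_life_days: float = 30.0) -> list[Node]:
    """Keep only nodes whose decayed score is still at or above the threshold."""
    return [n for n in nodes if decayed_score(n, half_life_days) >= threshold]


nodes = [
    Node("a", importance=0.8, last_access_days=0),    # fresh, kept
    Node("b", importance=0.8, last_access_days=120),  # stale, score 0.05, dropped
    Node("c", importance=0.3, last_access_days=30),   # score 0.15, kept
]
kept = [n.id for n in prune(nodes)]
```

Under these assumptions, a node that is retrieved often keeps resetting its age and survives, while one that is never touched drops below the threshold and is removed.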

A graph walk finds connections that vector search can't. Related concepts emerge through graph structure, not just similarity.
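
To make the contrast concrete, the sketch below assumes a plain breadth-first walk over an adjacency mapping, starting from seed nodes (for example, the top hits of a similarity search). The function name, the hop limit, and the sample node names are illustrative assumptions, not the product's actual traversal.

```python
from collections import deque


def graph_walk(edges: dict[str, list[str]], seeds: list[str],
               max_hops: int = 2) -> dict[str, int]:
    """Return {node: hop distance} for every node within max_hops of a seed."""
    dist = {s: 0 for s in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if dist[node] == max_hops:
            continue  # do not expand past the hop limit
        for nbr in edges.get(node, ()):
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist


# Hypothetical memory graph: each edge links a decision to what it led to.
edges = {
    "chose-postgres": ["latency-incident"],
    "latency-incident": ["cache-layer"],
    "cache-layer": ["redis-config"],
}
reached = graph_walk(edges, seeds=["chose-postgres"], max_hops=2)
```

Here "cache-layer" is reached in two hops from "chose-postgres" even though the two nodes need not be textually similar, which is the kind of link a pure similarity search could miss.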

Memory infrastructure for the AI agent era

Domain-agnostic: personal today, institutional tomorrow